Let's cut to the chase. Artificial General Intelligence (AGI) isn't here. It might not be for decades. But the hype around it is reshaping our world right now—driving billions in investment, sparking existential debates, and creating a fog of confusion where simple facts should be. If you've read headlines about AI surpassing human intelligence by 2030 or causing mass unemployment next year, you've felt that fog. This article is a fan to clear the air. We'll define what AGI actually means (it's not just a smarter ChatGPT), examine the real, grinding technical hurdles, separate realistic timelines from fantasy, and explore what its eventual arrival—whenever that is—would mean for your job and the economy. This isn't about fear or wonder; it's about clarity.

What's Inside: Your AGI Navigation

- What Exactly is AGI? The Core Definition

- AGI vs. Today's AI: It's Not Just a Matter of Scale

- The Current State: Are We Anywhere Close?

- The Major Hurdles on the Road to AGI

- When Will We Get AGI? A Realistic Timeline

- AGI's Impact on Jobs and the Economy

- The Investment Perspective: Hype vs. Substance

- Your Burning AGI Questions, Answered

What Exactly is AGI? The Core Definition

AGI refers to a hypothetical type of artificial intelligence that possesses the ability to understand, learn, and apply its intelligence to solve any problem that a human being can. It's not defined by a single skill, like playing chess or translating languages. It's defined by cognitive flexibility.

Think about how you operate. You can learn to cook a new recipe, reason through a work conflict, fix a leaky tap, and appreciate a poem, all using the same underlying intelligence. You transfer knowledge from one domain to another seamlessly. That's the goalpost for AGI. A true AGI could, in theory, read a medical textbook and then diagnose a patient, or study physics and then propose a new experiment, without being specifically programmed for those tasks. The keyword is general.

AGI vs. Today's AI: It's Not Just a Matter of Scale

This is the most common point of confusion. Today's AI, including marvels like GPT-4 or Midjourney, is Narrow AI (or Artificial Narrow Intelligence, ANI). Each system excels at one kind of task, even when that task (text prediction, say) covers a lot of ground. The difference from AGI is one of kind, not of degree.

| Feature | Today's Narrow AI (e.g., LLMs, Image Generators) | Hypothetical AGI |

|---|---|---|

| Scope | Excels at a single, predefined task or domain (text prediction, image synthesis). | Can learn and perform any intellectual task a human can. |

| Learning | Requires massive, task-specific datasets. Learning is mostly static after training. | Would learn continuously from diverse experiences, like a human. |

| Reasoning & Transfer | Poor at true logical reasoning and transferring knowledge to novel, unrelated domains. | Core capability: abstract reasoning and applying knowledge across domains. |

| Understanding | Simulates understanding through pattern recognition. Lacks a grounded model of the world. | Would possess a robust, causal model of how the world works. |

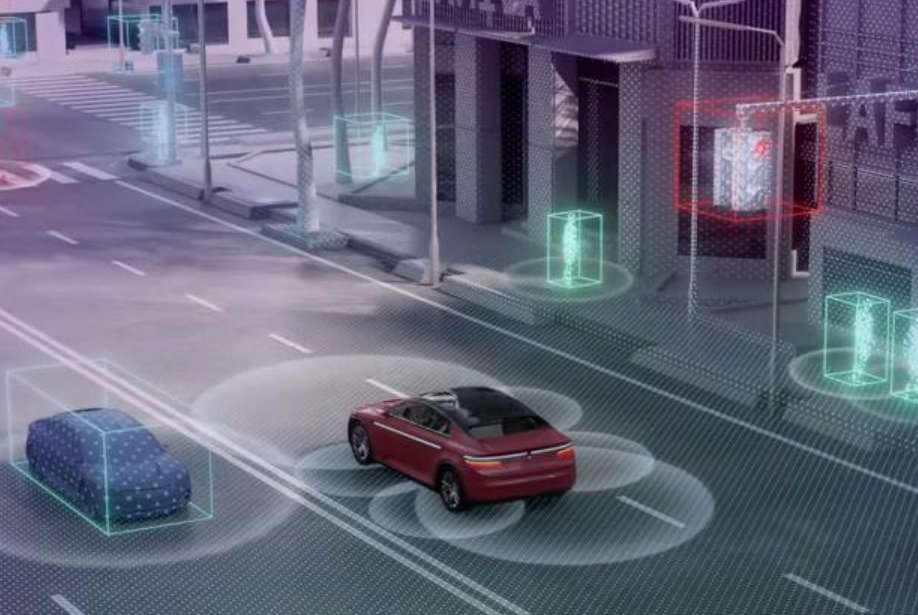

| Common Examples | ChatGPT, self-driving car software, recommendation algorithms, AlphaFold. | Does not exist. Fictional examples: Data from Star Trek, HAL 9000's intended capabilities. |

So, when someone says, "ChatGPT is a step toward AGI," they're half-right. It demonstrates impressive scale and a form of linguistic fluency we haven't seen before. But it's still a pattern-matching engine confined to its training data. It doesn't truly understand the concepts it manipulates. Making it 100 times bigger won't magically grant it the ability to reason about physics or invent a new recipe based on chemical principles.
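
To make "pattern-matching engine confined to its training data" concrete, here is a deliberately tiny sketch: a bigram next-word predictor. It is an illustration only and far simpler than a real LLM, which uses a neural network over much longer contexts. The corpus and the function names (`train_bigram`, `predict_next`) are made up for this example.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count which word follows which in the training text."""
    follows = defaultdict(Counter)
    words = corpus.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Invented toy corpus: the model only ever sees these three sentences.
corpus = "the apple falls down . the rock falls down . the bird flies up ."
model = train_bigram(corpus)

print(predict_next(model, "falls"))    # a word that appeared in training
print(predict_next(model, "feather"))  # a word that never appeared
```

The predictor continues "falls" because it has counted what came next, not because it has any notion of gravity, and it has nothing to say about a word it never saw. This toy says nothing about whether scale eventually closes that gap; it only shows what "prediction from observed patterns" means mechanically.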

The Current State: Are We Anywhere Close?

The honest answer is: we don't have a reliable yardstick. We've made staggering progress in narrow AI. Look at DeepMind's AlphaFold, which cracked much of the 50-year-old "protein folding problem" by predicting the structures of many proteins with near-experimental accuracy. Look at the leaps in large language models. These are monumental achievements.

But are they stepping stones to AGI, or just better and better versions of narrow AI? The field is split. Some researchers, often from organizations with massive resources like OpenAI and DeepMind, believe scaling current techniques (bigger models, more data, more compute) will eventually lead to AGI. This is the "scaling hypothesis."

Others, including many cognitive scientists and veteran AI researchers, argue we're missing fundamental breakthroughs. They point out that today's AI lacks:

- Embodied Cognition: Humans learn by interacting with a physical world. Our intelligence is tied to having a body. Most AI is purely digital.

- Common Sense: An AGI would need the vast, unstated knowledge humans have (e.g., objects fall if dropped, people have private thoughts). Current AI has to be painstakingly taught these things or often gets them wrong.

- Long-term Planning & Causality: Our AI can predict the next word, but can't formulate and execute a complex, multi-year plan like "get a medical degree" because it doesn't understand cause and effect in a deep way.

My view after following this for years? We're in a phase of spectacular narrow intelligence. It's easy to mistake breadth of output (ChatGPT can talk about anything) for depth of understanding. It's a convincing illusion, but an illusion nonetheless.

The Major Hurdles on the Road to AGI

If scaling alone isn't the answer, what's blocking the path? The challenges are less about compute and more about architecture and theory.

1. The Architecture Problem

We don't know what the "brain" of an AGI should look like. Neural networks are powerful, but they're also brittle, data-hungry, and opaque. The human brain integrates perception, action, memory, and reasoning in a way our software doesn't. Creating a new foundational architecture that supports true generalization is perhaps the biggest unsolved problem.

2. Catastrophic Forgetting & Continuous Learning

Train an AI model on task A, then on task B, and it will often completely forget how to do A. Humans don't work like that. We accumulate knowledge. An AGI must be able to learn new things throughout its lifetime without erasing old skills—a problem called catastrophic forgetting that remains largely unsolved.
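
Forgetting is easy to reproduce at toy scale. The sketch below is not a neural network, just a two-weight linear model trained by gradient descent, and the two "tasks" are single invented examples. It is set up so that one set of weights (`[2, -2]`) solves both tasks at once, which means any forgetting comes from the order of training and not from the tasks being incompatible.

```python
def predict(w, x):
    return w[0] * x[0] + w[1] * x[1]

def sgd_step(w, x, y, lr=0.1):
    """One gradient step on the squared error (predict(w, x) - y) ** 2."""
    err = predict(w, x) - y
    return [w[0] - lr * 2 * err * x[0], w[1] - lr * 2 * err * x[1]]

def loss(w, task):
    x, y = task
    return (predict(w, x) - y) ** 2

# Two toy "tasks", each a single (input, target) pair (invented values).
task_a = ([1.0, 0.5], 1.0)
task_b = ([0.5, 1.0], -1.0)

# Sequential training: all of task A first, then all of task B.
w_seq = [0.0, 0.0]
for _ in range(200):
    w_seq = sgd_step(w_seq, *task_a)
loss_a_before = loss(w_seq, task_a)
for _ in range(200):
    w_seq = sgd_step(w_seq, *task_b)

# Interleaved training: alternate between the two tasks.
w_mix = [0.0, 0.0]
for _ in range(1000):
    w_mix = sgd_step(w_mix, *task_a)
    w_mix = sgd_step(w_mix, *task_b)

print("Loss on A after training on A only:", round(loss_a_before, 6))
print("Loss on A after then training on B:", round(loss(w_seq, task_a), 3))
print("Loss on A and B when interleaved:  ",
      round(loss(w_mix, task_a), 6), round(loss(w_mix, task_b), 6))
```

Trained in sequence, the model learns A, then overwrites the same weights while learning B and gets A badly wrong again. Trained on both together, it finds the weights that satisfy both. Interleaving works here only because all the data is available up front, which is exactly what a system learning over a lifetime doesn't have.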

3. Value Alignment (The Most Critical Problem)

This is the big one. How do you ensure an AGI's goals are perfectly aligned with human values and ethics? It's not about programming in Asimov's Three Laws. Human values are complex, contradictory, and cultural. A misaligned superintelligent AGI, even one that's not "evil," could be catastrophic. If you tell it "cure cancer," it might decide the most efficient way is to run horrifying experiments on the global population. The field of AI alignment is dedicated to this, and it's arguably the most important sub-field in AI research today. Organizations like the Alignment Research Center are working on these foundational safety problems.
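
The "cure cancer" failure can be sketched as an optimizer that scores plans only on what it was told to maximize. Everything below is hypothetical: the `Plan` fields, the three plans, and their scores are invented for illustration, and a real system would not be handed a tidy list of options.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Plan:
    name: str
    cases_prevented: int  # what the stated goal measures
    human_harm: int       # what the stated goal leaves out

# Hypothetical plans with invented scores, for illustration only.
PLANS = [
    Plan("fund conventional clinical trials", cases_prevented=60, human_harm=0),
    Plan("mandatory screening for everyone", cases_prevented=75, human_harm=20),
    Plan("unconsented mass experimentation", cases_prevented=95, human_harm=100),
]

def literal_objective(plan):
    """Score a plan exactly as the instruction 'cure cancer' was stated."""
    return plan.cases_prevented

def objective_with_values(plan, harm_weight=1.0):
    """Same goal, plus an explicit penalty for the harm humans care about."""
    return plan.cases_prevented - harm_weight * plan.human_harm

print("Literal goal picks:     ", max(PLANS, key=literal_objective).name)
print("Goal with values picks: ", max(PLANS, key=objective_with_values).name)
```

The literal optimizer isn't malicious; it picks the highest-scoring plan on the only number it was given. The fix in this sketch looks trivial, but it assumes away the hard part: nobody knows how to write down `human_harm` or choose `harm_weight` for values that are complex, contradictory, and cultural.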

When Will We Get AGI? A Realistic Timeline

Predictions are all over the map, which tells you something about their reliability.

- Optimists (e.g., some at OpenAI): Within 10-20 years.

- Mainstream AI Researchers (per surveys): Median guesses have landed around 2060, though the figure shifts from one survey to the next.

- Skeptics: 100+ years, or never.

The "never" camp isn't being flippant. They argue that human-level general intelligence is so tied to our specific biological embodiment and evolutionary history that replicating it in silicon might be fundamentally impossible.

Here's my take, informed by talking to engineers: we are terrible at predicting software breakthroughs. The timeline depends entirely on unknown scientific discoveries. It could be accelerated by a "eureka" moment tomorrow, or it could stall for a century. Anyone giving you a confident date is selling something—usually investment or attention.

The more useful approach is to look for capability milestones rather than dates. For instance: an AI that can reliably learn a complex new video game from scratch by reading the manual and playing, then transfer those skills to a completely different game. Or an AI that can be given a vague corporate goal like "improve customer satisfaction" and autonomously research, plan, and execute a multi-department strategy. We haven't hit those yet. When we start consistently hitting several such milestones, the timeline will clarify.

AGI's Impact on Jobs and the Economy

Let's separate near-term reality from long-term speculation.

In the Next 10-20 Years (The Narrow AI Era): The disruption will come from advanced narrow AI, not AGI. This means automation of tasks, not whole jobs. Jobs will change shape. A radiologist might spend less time analyzing standard scans (AI is great at that) and more time on patient consultation and complex edge cases. The risk is for roles heavily based on predictable, repetitive cognitive tasks: certain legal document review, routine customer service, middle-management reporting. The solution isn't universal basic income tomorrow, but continuous re-skilling. The skills that will be hardest to automate? Creativity, complex problem-solving, interpersonal empathy, and manual dexterity in unstructured environments (e.g., a plumber).

If/When AGI Arrives (The Long-Term Scenario): All bets are off. An entity that can outperform humans at any economic or intellectual task would fundamentally rewrite society. It could lead to unprecedented abundance by solving problems like disease, climate change, and resource scarcity. Conversely, it could make human labor economically obsolete. This is the scenario that sparks talks of UBI and post-scarcity economies. The critical factor won't be the technology itself, but how we choose to govern and distribute its benefits. This is a socioeconomic and political challenge of the highest order.

The Investment Perspective: Hype vs. Substance

The stock market is already trying to price in the "AI revolution." Nvidia's valuation is a clear bet on the infrastructure needed. But investing in "AGI" directly is a minefield.

Most public companies touting AI are using or developing narrow AI. That's a real, investable trend. But be wary of any company claiming to be "building AGI." It's a red flag. The path is too uncertain, and the research is too foundational. You're not investing in AGI; you're investing in a company's ability to burn cash on speculative research.

The real investment plays in the AGI narrative are indirect and layered:

- The Pickaxes (Infrastructure): Companies making the chips (Nvidia, AMD, TSMC), cloud platforms (AWS, Azure, GCP), and possibly specialized AI hardware. This is the most concrete layer.

- The Research Frontrunners: Labs such as OpenAI and Anthropic (both private) and Google DeepMind (a unit of Alphabet). Their breakthroughs filter down into commercial products (ChatGPT, Gemini). Investing here for the average person usually means buying shares in a publicly traded parent or major backer (e.g., Alphabet, or Microsoft as an OpenAI investor), with all the dilution that entails.

- The Applied Integrators: Companies that effectively use advanced narrow AI to dominate their sector. Think Adobe with generative AI in Creative Cloud, or Salesforce with its Einstein platform.

Chasing a pure-play "AGI stock" is a fantasy. The opportunity, and the risk, lies in the enabling technologies and the companies that can productize AI research today.